The Identity-Action Loop: How Systems Evolve Power Without Losing Trust

Revisiting the Core Challenge

In Part I of this series on Identity, we framed the central problem like this: optimization breaks down in open systems because it assumes a fixed identity. It can make a system better at being what it already is, but it struggles when the environment forces the system to become something else.

Identity Architecture is one answer to that. It lets a system adapt its identity under structural stress while still preserving continuity, largely by separating core identity from surface identity.

That move raises the next question: if identity can change, what keeps the system from drifting, fragmenting, or quietly becoming unrecognizable?

The short answer is coherence. However, coherence only becomes useful once we stop thinking in purely linear, top-down control models.

Understanding Coherence: Beyond “More Control”

It’s tempting to treat coherence as tighter control: stricter rules, stronger alignment, more centralized enforcement. The problem is that this approach tends to freeze identity in place. It can keep a system legible, but often at the cost of making it brittle.

Coherence points at something slightly different. It’s not “don’t change.” It’s: change in a way that stays explainable, reviewable, and trustable. In other words, coherence makes evolution navigable.

A handhold that will matter later: coherence is less about preventing change and more about preventing silent change.

Three Meanings of “Identity” (So We Don’t Talk Past Each Other)

One reason identity discussions get slippery is that the word “identity” does multiple jobs at once. In this essay, it helps to keep three layers distinct:

Core identity: the system’s foundational commitments and legitimacy constraints—the things it claims it won’t violate without becoming a different system.

Surface identity: the visible operating shape—roles, configurations, permissions, operating modes, delegated authorities.

Identity state: the system’s current standing in the eyes of the larger system around it (trust tier, reputation weight, clearance level, risk budget, confidence budget). This standing tends to be conditional and updateable.

When I say “identity evolves,” I usually mean surface identity and identity state are changing. Core identity changes too, but less frequently and (ideally) only under explicit, heavyweight procedures.
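One way to make the three layers concrete is to give each its own type and give core identity a deliberately harder change path. This is a minimal sketch under stated assumptions: the class names, the fields, and the quorum rule in `revise_core` are hypothetical. They are chosen only to show that surface changes are routine while core changes require explicit approval.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class CoreIdentity:
    # Foundational commitments; changing these makes it a different system.
    commitments: frozenset[str]


@dataclass(frozen=True)
class SurfaceIdentity:
    # Visible operating shape: roles, mode, delegated authorities.
    roles: frozenset[str]
    operating_mode: str


@dataclass(frozen=True)
class IdentityState:
    # Standing as judged by the surrounding system; expected to move often.
    trust_tier: int
    risk_budget: float


@dataclass(frozen=True)
class Identity:
    core: CoreIdentity
    surface: SurfaceIdentity
    state: IdentityState


def change_surface(identity: Identity, surface: SurfaceIdentity) -> Identity:
    """Lightweight path: surface identity can change in routine operation."""
    return replace(identity, surface=surface)


def revise_core(identity: Identity, core: CoreIdentity,
                approvers: set[str], quorum: int) -> Identity:
    """Heavyweight path (hypothetical rule): core changes need a quorum of approvers."""
    if len(approvers) < quorum:
        raise PermissionError(f"core change needs {quorum} approvers, got {len(approvers)}")
    return replace(identity, core=core)
```

Because every type is frozen, each change produces a new `Identity` value rather than mutating the old one, which keeps the prior state available for comparison.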

What Coherent Identity Evolution Looks Like

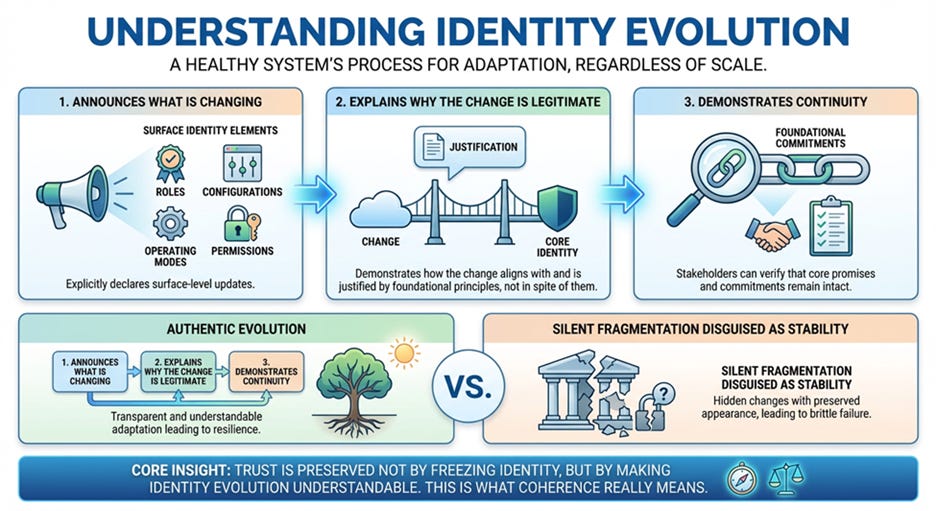

When a system evolves in a healthy way, the pattern is surprisingly consistent, whether the system is a team, an institution, or an automated agent:

It makes the change explicit

Usually this is a surface identity update: roles, operating mode, configuration, permission scope, decision rights.

It gives a legitimacy story

Not “we felt like it,” but “here’s how this change follows from our core identity and constraints.”

It leaves a trail of continuity

Other stakeholders can then verify that foundational commitments still hold, or see precisely where they were revised.

A practical way to say it: coherence is the difference between evolution and drift. Drift happens when identity changes, but nobody can clearly say what changed, why it was allowed, and how it remains accountable.
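The distinction between evolution and drift can be stated as a check. Assume, for illustration, that identity snapshots are flat key-value records and that each change is expected to carry a written rationale. Under those assumptions, drift is any field that changed with no rationale attached. `silent_changes` is a hypothetical helper, not an established API.

```python
def silent_changes(before: dict, after: dict, rationales: dict) -> list:
    """Return the identity fields that changed without a recorded rationale.

    A field counts as changed if it was added, removed, or given a new value
    (a missing key and an explicit None are treated as the same here).
    """
    changed = {k for k in before.keys() | after.keys() if before.get(k) != after.get(k)}
    # Evolution: every change has a rationale. Drift: at least one does not.
    return sorted(k for k in changed if not rationales.get(k))


before = {"role": "analyst", "mode": "manual"}
after = {"role": "approver", "mode": "manual", "scope": "loans"}
rationales = {"role": "promotion approved under policy P-1"}  # hypothetical policy id
print(silent_changes(before, after, rationales))  # ['scope']
```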

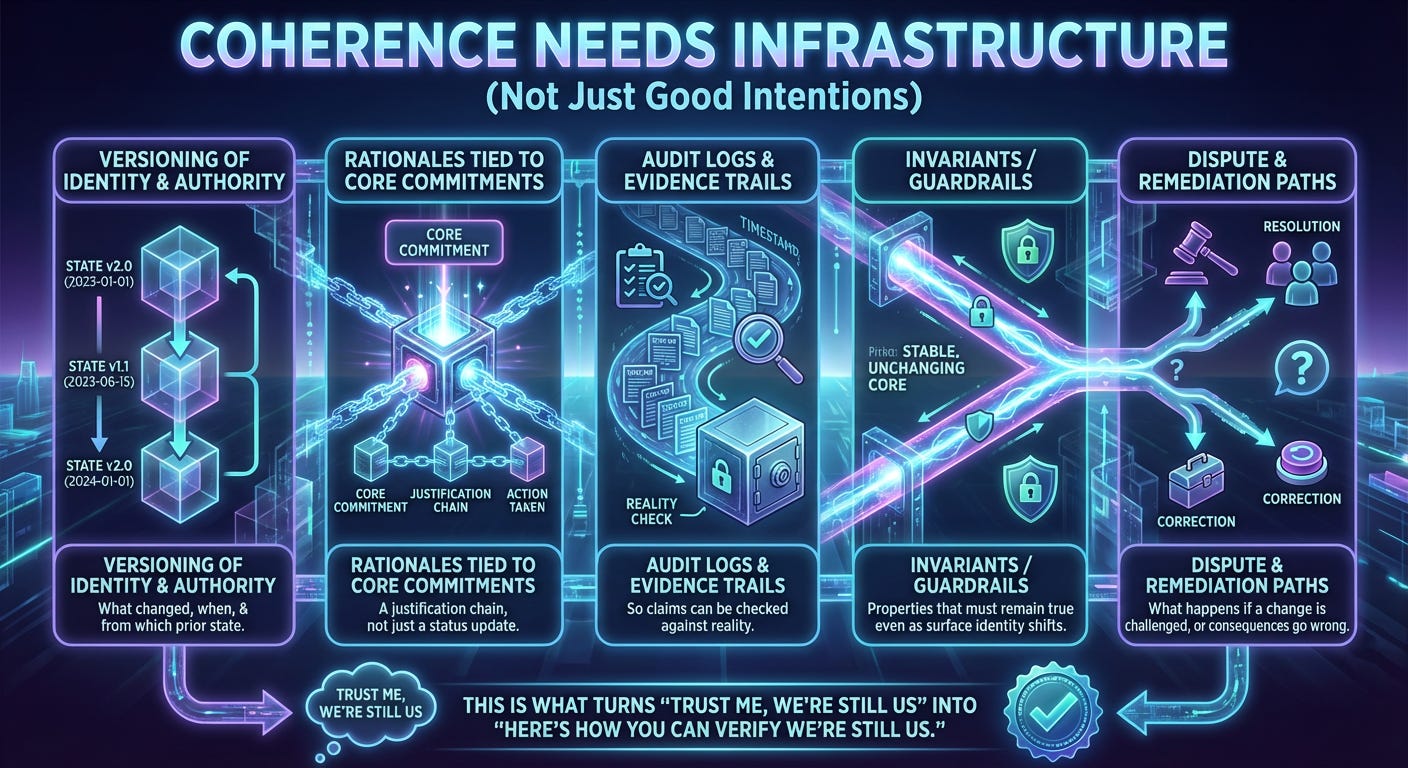

Coherence Needs Infrastructure (Not Just Good Intentions)

Coherence is partly cultural, but in complex systems it usually ends up being infrastructural. The minimum viable “coherence infrastructure” looks like:

Versioning of identity and authority (what changed, when, and from which prior state)

Rationales tied to core commitments (a justification chain, not just a status update)

Audit logs and evidence trails (so claims can be checked against reality)

Invariants / guardrails (properties that must remain true even as surface identity shifts)

Dispute and remediation paths (what happens if a change is challenged, or consequences go wrong)

This is what turns “trust me, we’re still us” into “here’s how you can verify we’re still us.” How does this work in real-world systems?
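As a rough sketch of how the five infrastructure components might fit together, here is a hypothetical append-only ledger for surface identity. `IdentityLedger`, its methods, and the rule that every proposal must cite a named core commitment are illustrative assumptions, not a reference design. Versioning comes from the `version`/`prior_version` chain; rationales and citations are required on every proposal; the history list is the audit trail; invariants are predicates checked before any change lands; and `dispute` is the remediation path.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Change:
    version: int
    prior_version: int   # -1 for the initial record
    state: dict          # full surface identity after this change
    rationale: str       # a justification, not just a status update
    cites: str           # core commitment the rationale appeals to


class IdentityLedger:
    def __init__(self, initial: dict, core: set[str],
                 invariants: dict[str, Callable[[dict], bool]]) -> None:
        self.core = core
        self.invariants = invariants  # name -> predicate over a proposed state
        self.history = [Change(0, -1, dict(initial), "initial state", "")]
        self.disputes: list[tuple[int, str]] = []

    @property
    def current(self) -> Change:
        return self.history[-1]

    def _append(self, state: dict, rationale: str, cites: str) -> Change:
        broken = [name for name, holds in self.invariants.items() if not holds(state)]
        if broken:
            raise ValueError(f"invariants violated: {broken}")
        change = Change(self.current.version + 1, self.current.version,
                        dict(state), rationale, cites)
        self.history.append(change)  # earlier entries are never edited or deleted
        return change

    def propose(self, state: dict, rationale: str, cites: str) -> Change:
        if cites not in self.core:
            raise ValueError(f"rationale must cite a core commitment, got {cites!r}")
        return self._append(state, rationale, cites)

    def dispute(self, version: int, reason: str) -> Change:
        """Remediation: restore the state that preceded `version`, as a new logged change."""
        by_version = {c.version: c for c in self.history}
        if version == 0 or version not in by_version:
            raise ValueError(f"cannot dispute version {version}")
        self.disputes.append((version, reason))
        prior = by_version[by_version[version].prior_version]
        return self._append(prior.state, f"remediation of v{version}: {reason}", "dispute")
```

Remediation does not erase the disputed entry; it appends a new version, so the record of what was changed and then reversed stays checkable.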

The Limits of the Linear Stack Model

Systems are often described with a tidy, linear stack:

Identity → Permission → Data → Models → Decisions → Actions

That’s a helpful diagram for control flow. But it can be misleading as a model of how systems behave over time.

In real systems, actions don’t just come out at the end. They land in the world, and the world pushes back. Actions generate new data, reshape trust, invite scrutiny, trigger regulation, create liability, and—over time—change what the system is allowed to do next.

Another handhold: actions are where irreversibility enters. If actions truly sat “at the bottom,” they’d be downstream and inconsequential. In practice they’re where the system commits, and commitments have consequences.

So the better mental model isn’t a hierarchy. It’s a loop.

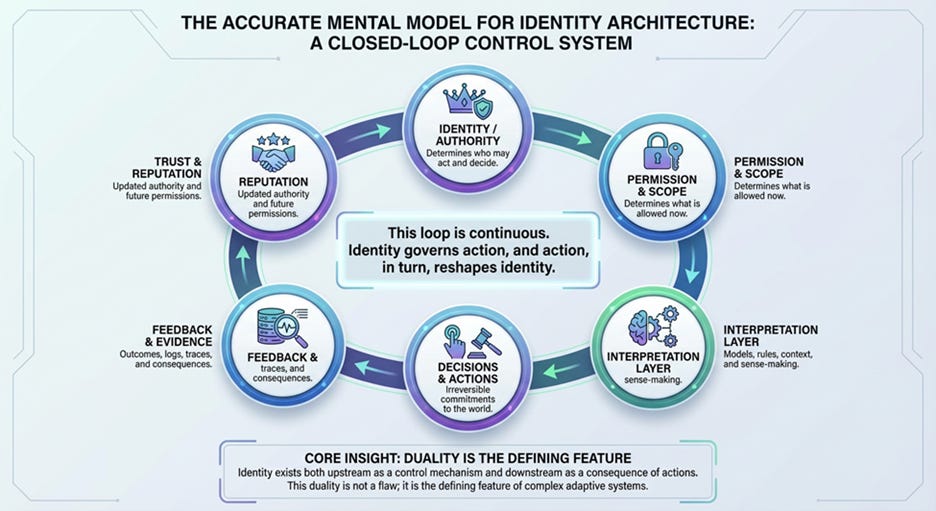

The Closed-Loop System Model

A more accurate model for Identity Architecture is a closed-loop control system:

Identity / Authority: who is recognized as able to act, and at what level

Permission & Scope: what is allowed right now

Interpretation Layer: models, rules, context, sense-making

Decisions & Actions: commitments that can’t be cleanly undone

Feedback & Evidence: outcomes, logs, traces, downstream effects

Trust & Reputation: updated standing that expands or constrains future authority

The loop keeps running. Identity shapes action; action reshapes identity.

A simple way to hold it in your head: identity is both a cause and an effect in complex systems. That’s not a bug—it’s the defining property.
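A toy simulation makes the cause-and-effect point tangible. In this sketch, trust gates what the actor may commit to, and the outcomes of those commitments feed back into trust. All function names, the linear permission cap, and the update rate are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Actor:
    trust: float  # Identity / Authority, as a number in [0, 1]


def permission_scope(trust: float) -> float:
    """Permission & Scope: largest commitment allowed right now (hypothetical linear cap)."""
    return 1000 * trust


def interpret(request: float, scope: float) -> bool:
    """Interpretation layer: a one-line rule standing in for models and context."""
    return request <= scope


def run_loop(actor: Actor, requests: list, outcomes: list, rate: float = 0.1) -> list:
    """Run one pass of the loop per request and return the trust trajectory."""
    trajectory = [actor.trust]
    for request, went_well in zip(requests, outcomes):
        scope = permission_scope(actor.trust)
        if interpret(request, scope):
            # Decisions & Actions: a commitment is made, so its outcome becomes evidence.
            target = 1.0 if went_well else 0.0
            # Trust & Reputation: move standing a fraction of the way toward the evidence.
            actor.trust += rate * (target - actor.trust)
        # Declined requests produce no evidence here, so trust is unchanged.
        trajectory.append(actor.trust)
    return trajectory
```

Starting from `Actor(0.5)` with requests `[400, 400, 600]` that all go well, trust climbs to roughly 0.55 and then 0.595. The third request is declined because it exceeds the resulting cap of about 595. Identity limited the action, and earlier actions had set that limit.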

A Concrete Example (Lending)

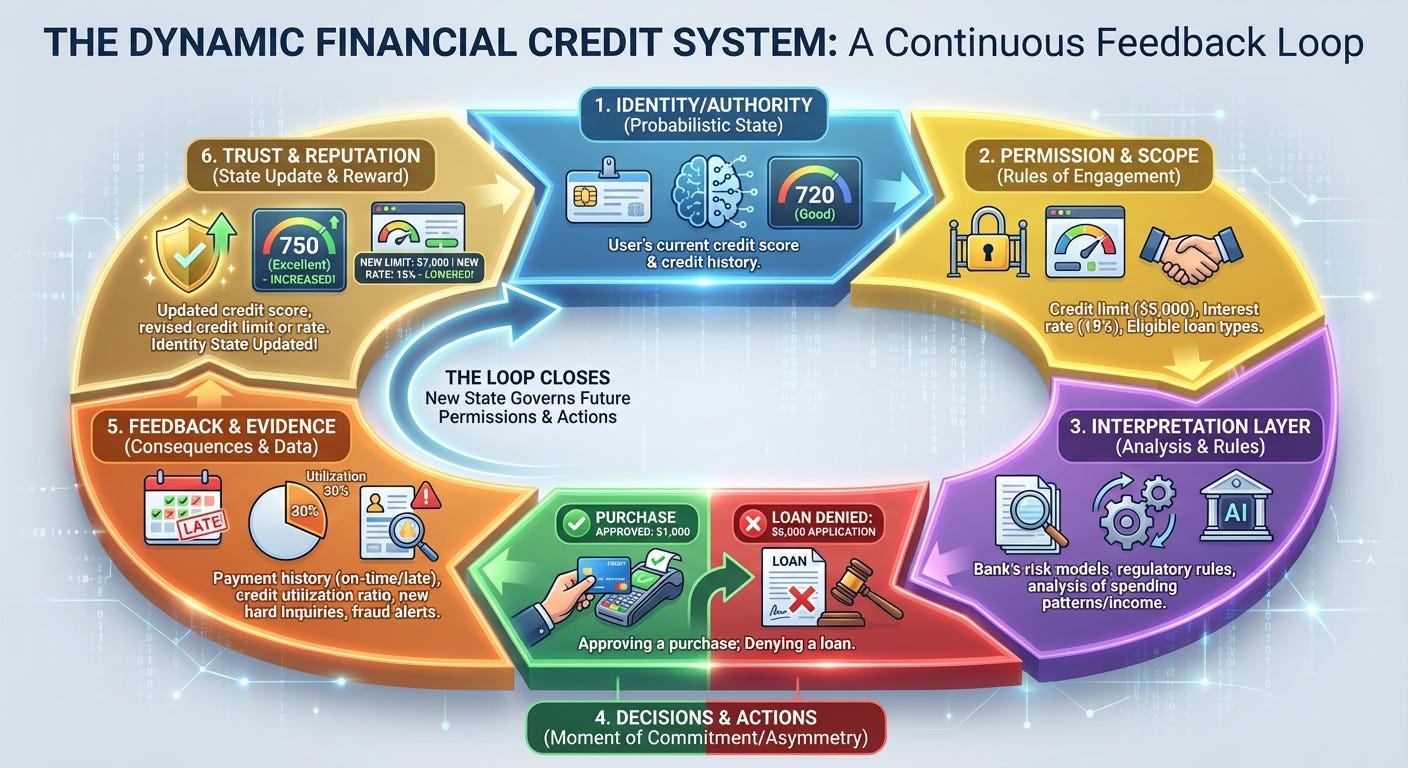

Take a lending platform:

A user starts with a certain identity state (say: verified, medium trust tier).

That identity state unlocks a permission scope (loan size caps, interest rates, faster approvals).

The platform’s interpretation layer evaluates new applications (income, history, fraud signals, model predictions).

The system approves or declines loans—those approvals are real commitments with legal and financial consequences.

Repayment behavior, disputes, charge-offs, and audits produce feedback and evidence.

Over time, the user’s trust tier shifts: they earn higher limits, or lose privileges, or get pushed into manual review.

Notice what changed: not just “data,” and not just “model outputs.” What changes is what the system is willing to let happen next. That’s identity in its stateful, operational form.
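The lending walk-through can be reduced to a tier table plus an update rule. The tier names, loan caps, and thresholds below are invented for illustration; a real platform would set them through its own credit policy and regulatory constraints.

```python
TIERS = ["manual_review", "low", "medium", "high"]  # ordered lowest to highest
LOAN_CAP = {"manual_review": 0, "low": 1_000, "medium": 5_000, "high": 20_000}


def approve(tier: str, amount: int) -> bool:
    """Permission scope: automatic approval only for positive amounts within the tier's cap."""
    return 0 < amount <= LOAN_CAP[tier]


def update_tier(tier: str, on_time: int, late: int, charge_offs: int) -> str:
    """Feedback and evidence from one review period move the user between tiers."""
    i = TIERS.index(tier)
    if charge_offs > 0:
        return "manual_review"                     # losses route the user to a human
    if late > on_time // 4:
        return TIERS[max(i - 1, 0)]                # too many late payments: drop one tier
    if on_time >= 12 and late == 0:
        return TIERS[min(i + 1, len(TIERS) - 1)]   # twelve clean payments: rise one tier
    return tier
```

For example, `update_tier("medium", on_time=12, late=0, charge_offs=0)` returns `"high"`, while any charge-off routes the user to `"manual_review"`, where nothing is approved automatically.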

Identity as a State Variable (Not a Static Label)

Many system failures start with treating identity as a fixed token: a credential you “have” once and then carry around unchanged.

More advanced systems treat identity as something closer to a state variable:

probabilistic and conditional,

context-dependent,

updated by evidence,

reputation-weighted,

and sometimes revocable.

We already accept this in practice: credit scores, medical licenses, API trust tiers, security clearances, model confidence budgets, agent tool-use allowances. These are all dynamic identity states that rise and fall based on behavior and outcomes.
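One common way to model identity as a state variable is a Beta-style reputation score: keep pseudo-counts of good and bad outcomes and report their posterior mean. The sketch below assumes a hypothetical `TrustState` class, a uniform Beta(1, 1) prior, per-context counts, and a single revocation flag. It is meant to show each property from the list: probabilistic, context-dependent, updated by evidence, reputation-weighted, and revocable.

```python
from dataclasses import dataclass, field


@dataclass
class TrustState:
    # Per-context [alpha, beta] pseudo-counts; unseen contexts use the uniform Beta(1, 1) prior.
    counts: dict[str, list[float]] = field(default_factory=dict)
    revoked: bool = False

    def observe(self, context: str, success: bool, reporter_weight: float = 1.0) -> None:
        """Add one piece of evidence, weighted by the reputation of whoever reported it."""
        alpha_beta = self.counts.setdefault(context, [1.0, 1.0])
        alpha_beta[0 if success else 1] += reporter_weight

    def trust(self, context: str) -> float:
        """Posterior mean alpha / (alpha + beta) for this context, or 0.0 once revoked."""
        if self.revoked:
            return 0.0
        alpha, beta = self.counts.get(context, [1.0, 1.0])
        return alpha / (alpha + beta)

    def revoke(self) -> None:
        """Revocation overrides accumulated evidence in every context."""
        self.revoked = True
```

Two successes and one failure reported by a half-weight source leave `trust("payments")` at 3 / 4.5, about 0.67, while an unrelated context such as `"hiring"` stays at the prior of 0.5.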

A handhold that ties back to coherence: if identity is updateable, then the update mechanism becomes part of the system’s morality and governance—not just its plumbing.

Locating Asymmetry: Power Shows Up at the Moment of Commitment

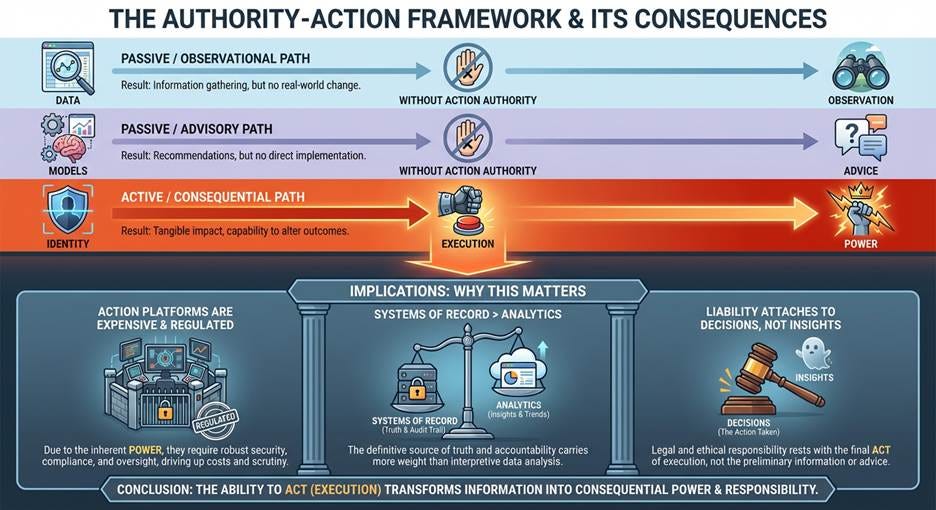

Once you see the loop clearly, one implication becomes hard to ignore, particularly in the context of artificial intelligence: asymmetry does not reside in data, models, insights, or dashboards. It is expressed at the moment of commitment, when a system executes a trade, approves a loan, escalates a patient, blocks a transaction, dispatches a robot, or triggers a legal or financial obligation. That is the point where power becomes real.

So:

Data without action authority is observation.

Models without action authority are advice.

Identity plus execution is power.

This is why action platforms are expensive and regulated, why systems of record matter more than analytics, and why liability attaches to decisions rather than insights.
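The three-line summary of observation, advice, and power can be read as an authority check placed directly in front of the commitment step. In this hypothetical sketch, the same input ends up as observation, advice, or an executed action depending only on the caller's authority level. `Authority`, `act`, and the log format are invented for illustration.

```python
from enum import IntEnum
from typing import Callable


class Authority(IntEnum):
    OBSERVE = 0   # may read data
    ADVISE = 1    # may also produce recommendations
    EXECUTE = 2   # may also commit actions in the world


def act(recommendation: str, authority: Authority,
        commit: Callable[[str], None], audit: list) -> str:
    """Route one recommendation according to the caller's authority."""
    if authority < Authority.ADVISE:
        return "observation"                      # nothing leaves the system
    if authority < Authority.EXECUTE:
        audit.append(f"advised: {recommendation}")
        return "advice"                           # someone else must decide
    audit.append(f"committed: {recommendation}")  # record before the irreversible step
    commit(recommendation)
    return "committed"
```

Only the `EXECUTE` branch calls `commit`, so that branch is where liability and audit requirements naturally attach.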

Coherence as the Integrative Layer

At this point we can say the integration more plainly:

Identity Architecture answers: when and how may this system change what it is allowed to be?

Coherence Infrastructure answers: how do we make those changes legible, reviewable, and trustworthy inside a larger ecosystem?

One way to summarize: identity can evolve locally, but coherence is what prevents local evolution from becoming global fragmentation.

This separation is what makes aggressive adaptation possible without collapse. It supports decentralized evolution without losing continuity. It’s a path toward durability without rigid control.

Why This Matters Before Talking About AI

AI makes these dynamics impossible to ignore, but it didn’t create them. What AI does is push more “interpretation” and more “initiative” into the system—often at speed, and often with ambiguous boundaries between suggestion and execution.

That means identity, authority, and action can’t remain background assumptions. They become first-class architecture.

In the next section, we’ll apply Identity Architecture and coherence directly to AI systems—why many current approaches stall out, and what changes when authority, permission, and consequence sit at the center of the design.

The future won’t belong to the systems that optimize most aggressively. It will belong to the systems that know when to change, can do so coherently, and remain accountable for what they make happen.